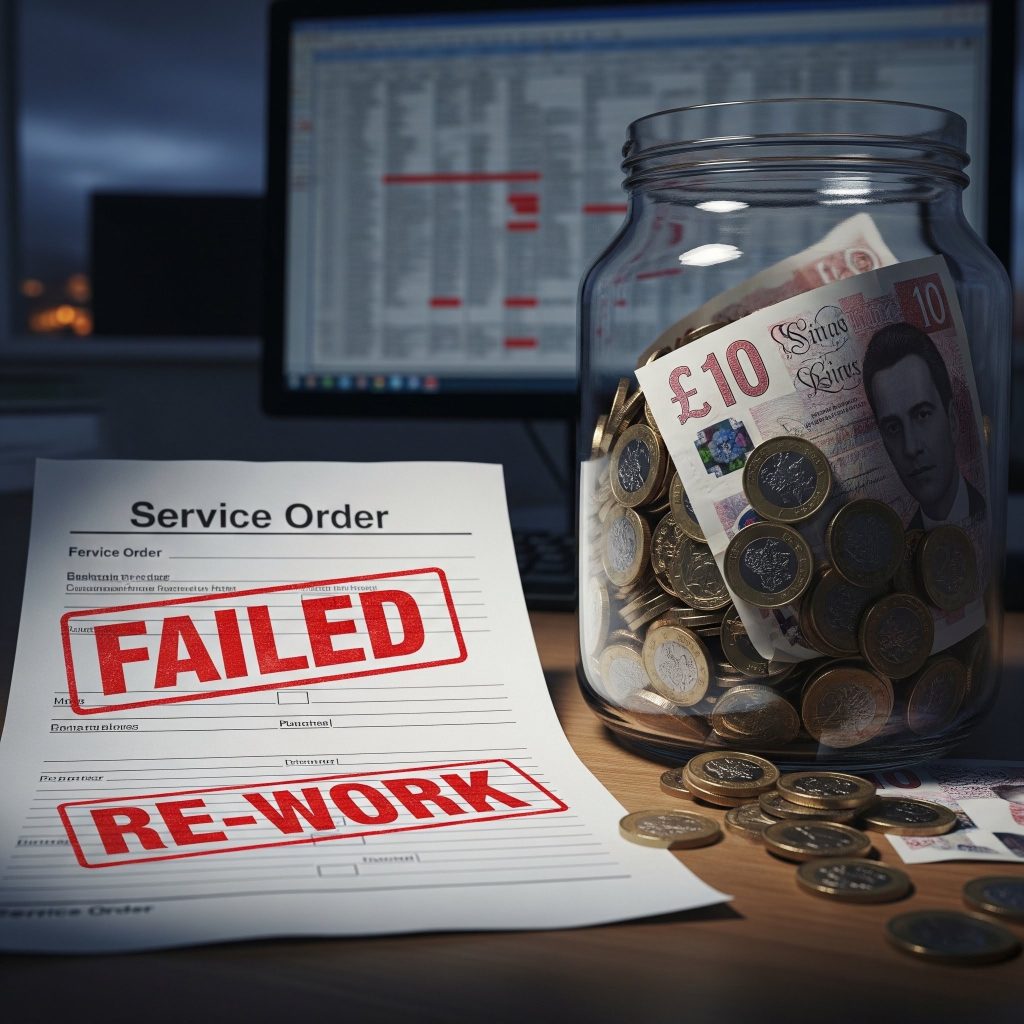

It happens on every copper withdrawal programme that isn’t managed properly. A sales order gets raised against a migrated address, an engineer is dispatched and they arrive to find nothing to connect. This is what that failure looks like from the inside — and what prevents it.

Picture the scene. An engineer drives forty minutes to a job. Rings the doorbell, introduces himself, goes around the back of the house to the telecom connection point. No active line. Checks the job notes. Checks the address. Calls the office. Turns out the exchange serving this street was withdrawn three months ago. The property is already on fibre. Somebody raised a copper broadband order against it anyway, the provisioning system accepted it and nobody noticed until this moment.

The engineer drives home. The customer waits another week. The job costs the operator around £200 in wasted time. And if this is happening dozens of times a week across a large withdrawal programme — which it is, at operators who don’t have the right controls in place — it adds up to something that seriously damages both the budget and the relationship with the regulator.

This piece is about that failure and the others like it. Not the technical challenge of laying fibre — that’s well understood — but the operational and systems challenge of managing the transition. What goes wrong, why it goes wrong and what an operator actually needs in place to run a withdrawal programme without it becoming a slow-motion crisis.

Running a programme in reverse

There’s something psychologically unusual about a withdrawal programme that catches operators off guard the first time they run one. Almost everything else in network operations is additive. You build things, extend things, connect things. The direction of travel is always forward.

Withdrawal is the opposite. You are systematically removing infrastructure that customers currently depend on, on a rolling timeline, while simultaneously replacing it with something better — and the entire operation depends on your systems knowing, in real time and at address level, exactly which properties are in which state of transition.

That sounds manageable until you’re dealing with 50,000 addresses across twelve exchange areas, each at a different point in the migration lifecycle, with multiple other licensed operators (OLOs) serving customers in the affected areas, under regulatory obligations that require you to prove notification was completed on time. At that scale, the complexity isn’t in the fibre. It’s in the data.

The six phases nobody warns you about

Most withdrawal programmes go through the same sequence of phases. The technical teams know this well. The OSS teams often find out about it later than they should.

Four things that actually go wrong

Once you have run these programmes for a long time, the failure modes become fairly predictable. Not because operators are careless (they aren’t) but because the problems are structural.

The engineer with nothing to connect to

We started here because it’s the most visible failure, but it’s actually a symptom of a deeper problem: the order management system accepted a copper order for an address that no longer has copper. That means one of three things: the blocking wasn’t in place, the blocking wasn’t applied to all channels, or the blocking was applied but had a gap. Perhaps the OLO’s ordering system wasn’t covered, or a particular product type slipped through.

The instinctive response is to brief the sales team. Don’t. Briefings fade. People change. Processes drift. The only reliable fix is to enforce the block in the system — before the order is created, not after it’s failed.

If you have wholesale partners serving customers in the withdrawal area, they need the same order blocks applied to their systems too. If you’re only blocking orders in your own BSS and your OLOs are still raising copper orders through their systems, you will have the exact same problem — just with an additional layer of complexity when it comes to unpicking who’s responsible for the failed engineer visit.
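As a minimal sketch of what "enforce the block in the system" could look like, the check below rejects a copper order before it is created, and applies the same rule whatever channel the order came from. The state names, the `Order` fields, the channel labels and the rule that unknown addresses are blocked are all assumptions made for illustration, not a description of any particular BSS or OSS.

```python
from dataclasses import dataclass
from enum import Enum


class AddressState(Enum):
    # Hypothetical lifecycle states; a real programme defines its own.
    COPPER_ACTIVE = "copper_active"
    WITHDRAWAL_PENDING = "withdrawal_pending"
    MIGRATION_IN_PROGRESS = "migration_in_progress"
    COPPER_WITHDRAWN = "copper_withdrawn"


# Assumption: new copper orders are allowed only while copper is fully active.
COPPER_ORDERABLE_STATES = {AddressState.COPPER_ACTIVE}


@dataclass(frozen=True)
class Order:
    address_id: str
    technology: str  # "copper" or "fibre"
    channel: str     # e.g. "retail", "olo_portal", "partner_api"


class OrderBlocked(Exception):
    """Raised at order entry, before any engineer is dispatched."""


def validate_order(order: Order, address_states: dict[str, AddressState]) -> None:
    """Reject copper orders for addresses that are no longer eligible for copper.

    The check never looks at order.channel, so retail and OLO orders
    are treated identically.
    """
    state = address_states.get(order.address_id)
    if state is None:
        # Assumption: an address with no recorded status is blocked, not waved through.
        raise OrderBlocked(f"{order.address_id}: no address status on record")
    if order.technology == "copper" and state not in COPPER_ORDERABLE_STATES:
        raise OrderBlocked(
            f"{order.address_id}: copper not orderable in state {state.value}"
        )
```

Because the function is the single gate for every channel, closing a gap means changing one rule rather than briefing several teams.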

The inventory that didn’t match the ground

This one is quieter than the engineer failure, because it doesn’t produce an immediate visible incident, but the consequences can be much more serious. If the inventory records are wrong, the programme is built on incorrect assumptions. The wrong customers get notified. The wrong equipment gets decommissioned. At its worst, a customer whose address was incorrectly mapped to a decommissioned ISAM (the access node terminating their copper line) loses their copper service before they’ve been migrated to fibre.

Nobody sets out to have inaccurate inventory. It accumulates through years of change management where the physical work happened but the records update didn’t. A capacity upgrade here, a routing change there, an equipment swap that was done under pressure with a plan to “update the records later.” Later never comes.

The only solution is to audit before the programme begins. Map every address in scope to the equipment serving it. Validate against physical records. Find the discrepancies and fix them. It’s slow, unglamorous work, and it always takes longer than anyone thinks it will. But it’s far better than discovering the discrepancies mid-programme.
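The audit steps can be sketched as a reconciliation between two mappings: what the inventory records say and what a physical survey found. The function name, the three finding categories and the use of plain dictionaries keyed by address ID are illustrative assumptions; a real audit would read from the inventory system and survey data.

```python
def audit_inventory(
    in_scope: set[str],
    recorded: dict[str, str],
    surveyed: dict[str, str],
) -> dict[str, list[str]]:
    """Compare recorded address-to-equipment mappings with surveyed ones.

    in_scope: address IDs enrolled in the withdrawal programme.
    recorded: address ID -> equipment ID according to the inventory.
    surveyed: address ID -> equipment ID according to physical records.
    """
    findings: dict[str, list[str]] = {
        "unmapped": [],    # in scope, but the inventory has no equipment for it
        "unverified": [],  # recorded, but no physical record to check against
        "mismatched": [],  # inventory and physical records disagree
    }
    for address_id in sorted(in_scope):
        if address_id not in recorded:
            findings["unmapped"].append(address_id)
        elif address_id not in surveyed:
            findings["unverified"].append(address_id)
        elif recorded[address_id] != surveyed[address_id]:
            findings["mismatched"].append(address_id)
    return findings
```

An address only counts as clean when it is mapped, verified and consistent. Everything else goes onto a list that someone has to clear before the programme starts.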

The OLO who found out from a failed order

In wholesale markets, the withdrawal programme has to work for your OLOs as well as for your own retail operation. OLOs have customers in the withdrawal area. They have their own systems. They have regulatory rights — including, in some jurisdictions, the right to object to the withdrawal timeline or to provide their own alternative to the host operator’s fibre product.

The operators who handle this badly are the ones who send an email to the OLO account manager, consider that notification done, and then wonder why the OLO’s systems are still raising copper orders six months later. The OLO account manager told someone in operations. Operations meant to update the BSS. It didn’t happen.

The operators who handle this well give OLOs a proper portal with real-time address-level status, automated notifications as individual addresses move through the withdrawal lifecycle, and order blocking that applies to OLO-originated orders just as it applies to the host operator’s own orders. The OLO doesn’t need to do anything. It just works.

The regulatory close-out that couldn’t be evidenced

When the regulator asks for evidence that notification was completed on time, what do you have? If the answer is “a spreadsheet and some email records,” you have a problem. Not necessarily an immediate one, but the kind that causes serious difficulty if anything about the programme is ever contested.

Regulatory audit trails need to be built in from day one. Who was notified, when, via what channel, what the response was, when each address transitioned between states, who authorised any exceptions. This isn’t something you can reconstruct after the fact from email histories and spreadsheet logs. The system needs to be creating these records automatically throughout the programme.
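To make "creating these records automatically" concrete, here is a small sketch of an append-only audit trail. The event types, field names and JSON export are assumptions chosen for illustration, and a production system would write to durable, access-controlled storage instead of an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    address_id: str
    event_type: str   # e.g. "notification_sent", "state_changed", "exception_authorised"
    detail: str       # channel used, response received, old and new state, and so on
    actor: str        # system component or person responsible
    occurred_at: str  # ISO 8601 timestamp in UTC


class AuditTrail:
    """Append-only log: events are added, never edited or deleted."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, address_id: str, event_type: str, detail: str, actor: str) -> AuditEvent:
        event = AuditEvent(
            address_id, event_type, detail, actor,
            datetime.now(timezone.utc).isoformat(),
        )
        self._events.append(event)
        return event

    def history(self, address_id: str) -> list[AuditEvent]:
        """Everything that happened to one address, in the order it was recorded."""
        return [e for e in self._events if e.address_id == address_id]

    def export(self) -> str:
        """Serialise the full trail, for example as input to a close-out report."""
        return json.dumps([asdict(e) for e in self._events], indent=2)
```

The point of the design is that the record is written at the moment the notification or transition happens, by the same code path that performs it.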

The inventory problem deserves its own section

We’ve mentioned inventory accuracy twice already, but it’s worth being more specific about what “wrong inventory” actually looks like in practice — because the types of error matter.

Geographic misattribution is when addresses that fall within Exchange A’s catchment area are actually served by equipment in Exchange B. This happens more than you’d think in areas where exchange boundaries follow historical telephony geography rather than current network topology. If you define your withdrawal zone by exchange, these addresses get enrolled in the wrong programme.

Cabinet misattribution is when an address is recorded as being served by one cabinet but is actually served by another. Common after capacity upgrades, line rebalancing exercises, or any work that involved moving line cards between cabinets. The address record wasn’t updated because the work was done at night and the engineer had six more jobs to get to.

Service type errors are when an address is recorded as having a copper ADSL service but actually has an already-migrated FTTC or FTTH service from a previous programme. These addresses should not be in the copper withdrawal programme at all — but if the inventory doesn’t reflect their actual service type, they’ll be enrolled anyway.

None of these errors are exotic. They’re completely normal consequences of running a network that changes continuously over many years. The problem is that withdrawal programmes amplify the impact of every inventory error — because you’re taking irreversible actions based on what the records say.
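The three error types map naturally onto three field comparisons between what the inventory says and what is actually on the ground. The sketch below assumes a simplified record with only an exchange, a cabinet and a service type; the class, function and label names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceRecord:
    exchange: str      # exchange whose equipment serves the address
    cabinet: str       # cabinet serving the address
    service_type: str  # e.g. "ADSL", "FTTC", "FTTH"


def classify_discrepancies(recorded: ServiceRecord, actual: ServiceRecord) -> list[str]:
    """Label each way the inventory record differs from the verified one."""
    errors: list[str] = []
    if recorded.exchange != actual.exchange:
        errors.append("geographic_misattribution")
    if recorded.cabinet != actual.cabinet:
        errors.append("cabinet_misattribution")
    if recorded.service_type != actual.service_type:
        errors.append("service_type_error")
    return errors
```

A single address can carry more than one label. An address served from a different exchange will usually sit on a different cabinet too, so both are reported and neither is allowed to mask the other.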

What the programme looks like when it’s working

It’s worth describing the positive case, because it’s genuinely achievable and the contrast with the failure modes is instructive.

When a withdrawal programme is running well, the address status in the OSS is the single authoritative record of where every property in scope sits in the migration lifecycle. Every system that touches orders — internal sales tools, OLO portals, API-based ordering from BSS partners — checks that status before accepting an order. The check takes milliseconds. The engineer never turns up to nothing.

The notification programme runs automatically off the address status. When an address moves to “withdrawal pending,” the customer notification is triggered. The notification is logged. If there’s no response within the regulatory window, the next step in the process is automatically flagged. Nothing falls through the gaps because nothing depends on a person remembering to do something.
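A rough sketch of that automation, under stated assumptions: a state change to "withdrawal pending" creates a logged notification, and a periodic check flags any notification with no response once the window has passed. The 30-day window is a placeholder (the real figure comes from the applicable regulation), and the function and field names are invented for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Placeholder only: the actual response window is set by the regulator.
RESPONSE_WINDOW = timedelta(days=30)


@dataclass
class Notification:
    address_id: str
    sent_on: date
    responded: bool = False


def on_state_change(address_id: str, new_state: str, today: date, log: list[Notification]) -> None:
    """Trigger and log a customer notification when an address enters withdrawal pending."""
    if new_state == "withdrawal_pending":
        log.append(Notification(address_id, today))


def overdue(log: list[Notification], today: date) -> list[str]:
    """Addresses with no response after the window has elapsed, to be flagged for the next step."""
    return [
        n.address_id
        for n in log
        if not n.responded and today - n.sent_on > RESPONSE_WINDOW
    ]
```

Running `overdue` on a schedule is what removes the dependency on a person remembering to chase.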

When the programme closes out for a zone, the regulator gets a report that was generated automatically from the system records — not compiled from emails and spreadsheets the night before the submission deadline. Every notification, every address transition, every exception and its authorisation, all there.

And the order blocks persist. Long after the programme teams have moved on to the next project, the system still knows that copper is gone from those addresses. It doesn’t matter that the product still exists in the billing catalogue. It doesn’t matter that a new member of the sales team doesn’t know the history. The answer is still no.

One thing most operators get wrong about timing

The most common mistake we see is operators treating the OSS configuration as something to sort out once the programme has started — after the zone is defined, after the announcement, sometimes after the first notifications have already gone out.

By that point, you’re already behind. The inventory audit should have happened before the announcement. The order blocking should be live before the first address moves to “migration in progress.” The OLO notifications should be configured before the first wholesale customer is affected.

There’s a pressure to announce programmes early — regulatory requirements, commercial commitments, competitive positioning. That pressure is real. But the OSS readiness needs to keep pace with the announcement, not trail it by several months. The gap between “we’ve announced the programme” and “our systems can actually manage it” is exactly where the problems accumulate.